
Want to know what GPT stands for in ChatGPT or GPT-3? Let’s find out its full form.

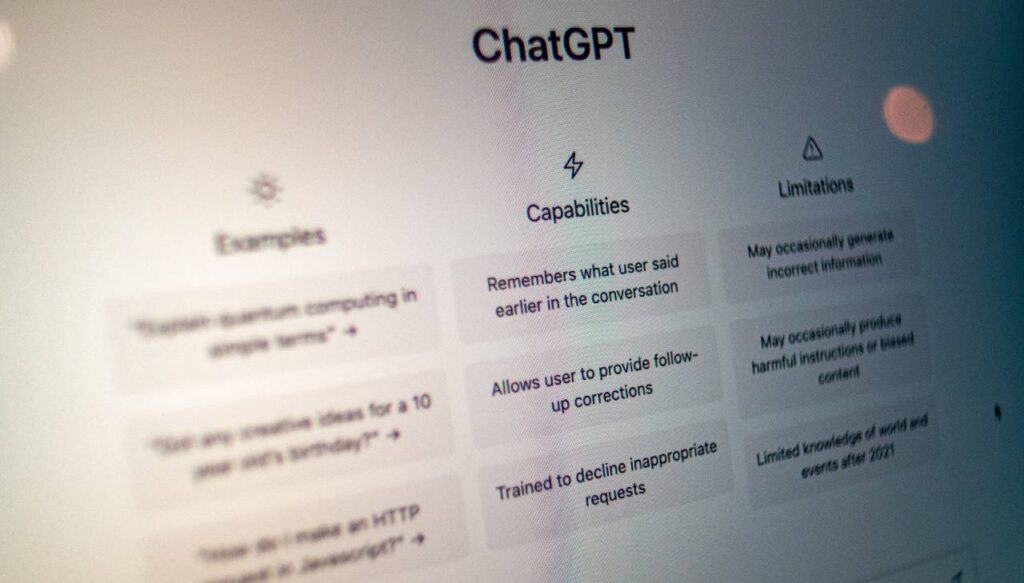

You have probably seen OpenAI’s ChatGPT or other AI chatbots that use GPT to produce natural language content. But do you actually know what GPT stands for in ChatGPT?

GPT stands for Generative Pre-trained Transformer. It is a type of large language model (LLM) and a framework for generative artificial intelligence. The first GPT was released by OpenAI in 2018.

GPT is the core of ChatGPT: it generates text based on patterns learned from its training data and answers users’ questions in a human-like form.

GPT was first trained on a large text corpus so that it learns to predict the next word in a passage. So, let’s take a look at what GPT is, what it does, its advantages and disadvantages, and more.

ChatGPT stands for Chat Generative Pre-trained Transformer. It is a program that can write realistically like a human, and it was developed by OpenAI, an AI research company. If you are still wondering who owns ChatGPT, the quick answer is OpenAI, the San Francisco-based artificial intelligence firm that developed it.

OpenAI is made up of the non-profit parent OpenAI Inc. and its for-profit subsidiary OpenAI LP. As part of their research efforts, they have created tools like GPT-3 and DALL-E 2. These products are used to advance their underlying mission.

GPT is a Transformer-based architecture and training procedure for natural language processing tasks.

GPT models are pre-trained on massive amounts of data, such as books and web pages, to generate contextually relevant and semantically coherent language.

GPT is a language model created by OpenAI. It was initially trained on a large corpus of text so that it can generate useful, relevant text that appears to be written by a human.

GPT is built from a stack of transformer blocks. In addition, GPT can be fine-tuned for use in other applications and for several natural language processing tasks.

Examples include translation between languages, text generation, and text classification. The “Pre-trained” in GPT refers to the initial stage of training on a text corpus.

This is the stage in which the model learns to predict the next word in a passage. It helps the model perform well on downstream tasks even with limited task-specific data.
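To make next-word prediction concrete, here is a minimal sketch in Python. It is not how GPT is implemented: GPT uses a neural network trained on a huge corpus, while this toy simply counts which word follows which. The three-sentence corpus and the function names `train_bigram_model` and `predict_next_word` are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Count, for each word, which words follow it in the corpus."""
    following = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Pair every word with the word that comes right after it.
        for current_word, next_word in zip(words, words[1:]):
            following[current_word][next_word] += 1
    return following


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical toy corpus, for illustration only.
corpus = [
    "the model predicts the next word",
    "the model learns from text",
    "the model predicts text",
]
model = train_bigram_model(corpus)
print(predict_next_word(model, "the"))    # model
print(predict_next_word(model, "model"))  # predicts
```

The idea carries over to GPT in spirit only: the training signal is still "guess the next word", but GPT looks at the whole preceding passage rather than a single word.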

The primary goal of GPT is to generate human-like text based on the input provided by the user. Users give the language model textual input, such as a sentence or a question on any topic. GPT can produce many types of text, including code, lyrics, scripts, and guitar tabs.

The transformer then processes the user’s query and generates a response one token at a time, drawing on patterns it learned during training rather than looking the answer up in a dataset. A large corpus of text was used to train these language models so that they can respond to a wide range of user input.
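The generation step can be sketched as a simple loop: pick a likely next token, append it, and repeat. The Python example below is a toy under stated assumptions, not OpenAI's code. The `NEXT_TOKEN_PROBS` table is a hypothetical stand-in for a trained model, it looks only at the last token (a real GPT looks at the whole context), and it always picks the most likely token, whereas real systems often sample.

```python
# Hypothetical next-token probabilities standing in for a trained model.
NEXT_TOKEN_PROBS = {
    "<start>": {"gpt": 0.9, "the": 0.1},
    "gpt": {"generates": 0.7, "is": 0.3},
    "generates": {"text": 0.8, "code": 0.2},
    "text": {"<end>": 1.0},
    "code": {"<end>": 1.0},
    "is": {"<end>": 1.0},
    "the": {"<end>": 1.0},
}


def generate(prompt_tokens, max_new_tokens=10):
    """Extend the prompt one token at a time until <end> or the token limit."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        last_token = tokens[-1] if tokens else "<start>"
        distribution = NEXT_TOKEN_PROBS.get(last_token)
        if distribution is None:
            break  # the toy table has no entry for this token
        # Greedy choice: take the token with the highest probability.
        next_token = max(distribution, key=distribution.get)
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens


print(" ".join(generate(["gpt"])))  # gpt generates text
```

Each new token is fed back in as input for the next step, which is why the output reads as a continuous passage rather than a lookup result.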

GPT is considered a major step forward in the world of artificial intelligence. It generates human-like text using machine learning. OpenAI’s language model has improved with every upgrade from GPT-1 to GPT-4. So, let’s look at the evolution of GPT through the years.

The original GPT was presented as a powerful language model that predicts the next token in a sequence. It was released in 2018. It uses a single task-agnostic model with generative pre-training followed by discriminative fine-tuning. This model had about 117 million parameters. GPT gained broad knowledge through pre-training on a large amount of text, which was beneficial in solving several NLP tasks.

OpenAI released the second version of GPT, named GPT-2, in 2019. The primary objective of this language model was to predict the next word from the words that precede it. GPT-2 opened a new direction for working with text data, as a transformer-based language model that used artificial intelligence to improve language processing capabilities.

GPT-2 had about 1.5 billion parameters and was trained on roughly 8 million web pages collected by following outbound links, which amounted to around 40 GB of text. Like the previous model, GPT-2 was also used for a range of NLP tasks.

The third version, GPT-3, introduced various useful features and capabilities. This language model was released in 2020 and can interpret text, answer complex queries, compose text, and more. In November 2022, a successor from the GPT-3.5 series powered the popular AI chatbot ChatGPT, which gained massive success in the AI chatbot market.

This version of GPT has about 175 billion parameters, which lets it handle a wide range of tasks with little or no task-specific training. In addition, GPT-3 can pick up on a few examples given in the prompt and use them to generate unique output such as blogs, articles, and more.
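To put that number in perspective, the short Python sketch below compares GPT-3's 175 billion parameters with the roughly 117 million of the first GPT, and estimates how much storage the weights alone would need. The 2 bytes per parameter figure is an assumption (16-bit numbers), not a published detail of how OpenAI stores the model, and `weight_storage_gb` is a helper name invented for this example.

```python
GPT1_PARAMETERS = 117_000_000      # first GPT, about 117 million
GPT3_PARAMETERS = 175_000_000_000  # GPT-3, about 175 billion
BYTES_PER_PARAMETER = 2            # assumption: each weight stored as a 16-bit number


def weight_storage_gb(parameter_count, bytes_per_parameter=BYTES_PER_PARAMETER):
    """Estimate storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return parameter_count * bytes_per_parameter / 1e9


growth_factor = GPT3_PARAMETERS / GPT1_PARAMETERS
print(f"GPT-3 has about {growth_factor:,.0f}x the parameters of the first GPT")
print(f"GPT-3 weights at 16 bits each: about {weight_storage_gb(GPT3_PARAMETERS):,.0f} GB")
```

Under these assumptions, GPT-3 is roughly 1,500 times larger than the first GPT, and its weights alone would take about 350 GB.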

Here are some of the capabilities of GPT-3:

GPT-4 is the latest language model, launched in March 2023. It introduced new possibilities in the AI market by adding vision input, which lets users provide input as both text and images. GPT-3 had issues with speed, accuracy, and occasional outages. GPT-4 has fixed these issues to some extent.

The number of parameters in GPT-4 has not been disclosed, although it is expected to be higher than that of GPT-3. It is a multimodal model that, according to OpenAI, reaches human-level performance on several academic and professional benchmarks, including passing a simulated bar exam and scoring well on the LSAT.

The evolution from GPT to GPT-4 is quite impressive, and with this rate of improvement, the GPT language model can reach even greater heights.

Language models like GPT are widely used by businesses and organizations to increase productivity in content creation, coding, and more.

Here are some of the well-known real-world applications that use GPT:

Applications such as Be My Eyes use GPT to improve their language abilities and provide human-like responses that are relevant and accurate. Used this way, GPT works almost like a virtual assistant and helps users manage tasks such as scheduling meetings, planning the day, setting reminders, and more.

GPT language models are widely used by companies such as H&M and Uber to provide faster answers and increase productivity in customer service.

GPT helps these companies understand and respond to customers’ queries quickly in a human-like form, which improves customer satisfaction and reduces the workload of customer service representatives.

Major companies use GPT on a large scale to develop content for their web pages, social media channels, blogs, articles, and more, in order to strengthen their media presence and attract traffic. This helps companies produce content faster, saving time and resources while generating new ideas for their content.

Here are some of the advantages of GPT in ChatGPT:

Now that we have seen the advantages of GPT in ChatGPT, let’s take a look at its limitations:

GPT technology has advanced artificial intelligence with its capabilities. It has significantly impacted various sectors such as business, logistics, social media, healthcare, and more.

Although GPT has a few limitations, the future of GPT technology still looks promising. With the introduction of vision input and its integration with Be My Eyes, we can expect major developments and improvements in GPT technology.

GPT has changed the artificial intelligence industry. With every new upgrade, the language model has improved. Especially after the introduction of GPT-3 and ChatGPT, people on a large scale were able to generate human-like content, code, translations into different languages, and more thanks to GPT.